HTTP Error 499: What It Is, Why Nginx Logs It, and How to Fix It (2026)

You checked your Nginx logs and saw a wall of 499s. Good news: in most cases, it's not your server failing. It's your server recording the fact that someone gave up. Here's what that means, why it happens, and how to fix it, whether you're a developer or just someone trying to keep a site healthy.

Quick answer: A 499 error means the client (a browser, mobile app, or API consumer) closed the connection before Nginx finished responding. Nginx invented this code to distinguish "I failed" (5xx) from "the client left before I could respond" (499). It does not exist in the official HTTP standard (RFC 9110). It is not a subtype of 502. The cause is almost always a slow backend or a timeout mismatch, not a broken server.

TL;DR

499 = the client closed the connection before Nginx responded; Nginx-specific, not part of the HTTP standard

Most common cause: an upstream server (database, API, application) taking too long

The fix depends on the cause: optimize the backend, adjust proxy_read_timeout, or fix the timeout alignment of your CDN/proxy

499 ≠ 504: with a 504, the proxy gave up; with a 499, the client gave up first

A few 499s per day is normal; a sustained spike on one endpoint is a real signal

Proxies add latency hops; a slow proxy, or one with a flagged IP, pushes borderline requests into 499 territory

What is a 499 error: Simple explanation + technical definition

The phone-hold analogy is the clearest way to understand this. You call customer support. You're put on hold. After two minutes, you hang up. From the company's side, the call connected and an agent was working on your case, but you disconnected before they could answer. That is a 499.

Nginx logs this because it needs a way to say "I was working on this and the client left," which is different from "I failed to process the request." The server was fine. The client stopped waiting.

Three things that make 499 unique:

It is exclusive to Nginx:

Apache, IIS, and other servers do not use this code.

If someone tells you to check for 499 errors on an Apache server, they are wrong.

MDN's reference list of HTTP status codes does not include 499 because it was never standardized; Nginx created it internally for logging purposes.

It is not a 502:

Other guides call 499 "a special case of 502 Bad Gateway."

That is factually incorrect.

A 502 means Nginx received an invalid response from the upstream.

A 499 means the client disconnected before any response was sent.

Treating them the same way leads you to the wrong fix.

It is usually a symptom, not the cause:

The 499 in your log is the event.

The cause is whatever made the response too slow: a database query, an external API call, or a proxy adding too much latency.

499 vs 504 vs 408: At a glance

| Code | Who gave up | Meaning | In the HTTP standard? |
| --- | --- | --- | --- |
| 499 | The client | Client closed the connection before the server responded | No, Nginx only |
| 504 | The proxy/gateway | Proxy timed out waiting for the upstream | Yes |
| 408 | The server | The client was too slow to send the request | Yes |

If you see a 504 in the browser, your upstream is too slow and your proxy gave up. If you see a 499 in the server logs, the client gave up before your proxy did. Same slow upstream; the difference is who disconnected first.
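The "who gave up first" rule can be written as a small decision function. This is a simplified sketch, not Nginx's actual logic: the function name and its parameters are hypothetical, each timeout is treated as a single deadline for the whole response, and connection errors are ignored.

```python
def logged_status(upstream_seconds, client_timeout, proxy_timeout):
    """Return the status a proxy such as Nginx would be expected to log.

    All arguments are in seconds. Simplified model: whichever deadline is
    reached first decides the outcome.
    """
    if upstream_seconds < min(client_timeout, proxy_timeout):
        return 200  # the upstream answered before anyone gave up
    if client_timeout < proxy_timeout:
        return 499  # the client closed the connection first
    return 504      # the proxy stopped waiting for the upstream first


if __name__ == "__main__":
    # Same 45-second upstream, different outcome depending on who is less patient.
    print(logged_status(45, client_timeout=30, proxy_timeout=60))
    print(logged_status(45, client_timeout=120, proxy_timeout=40))
```

The point of the model is that the upstream duration alone does not determine the code; the ordering of the two timeouts does.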

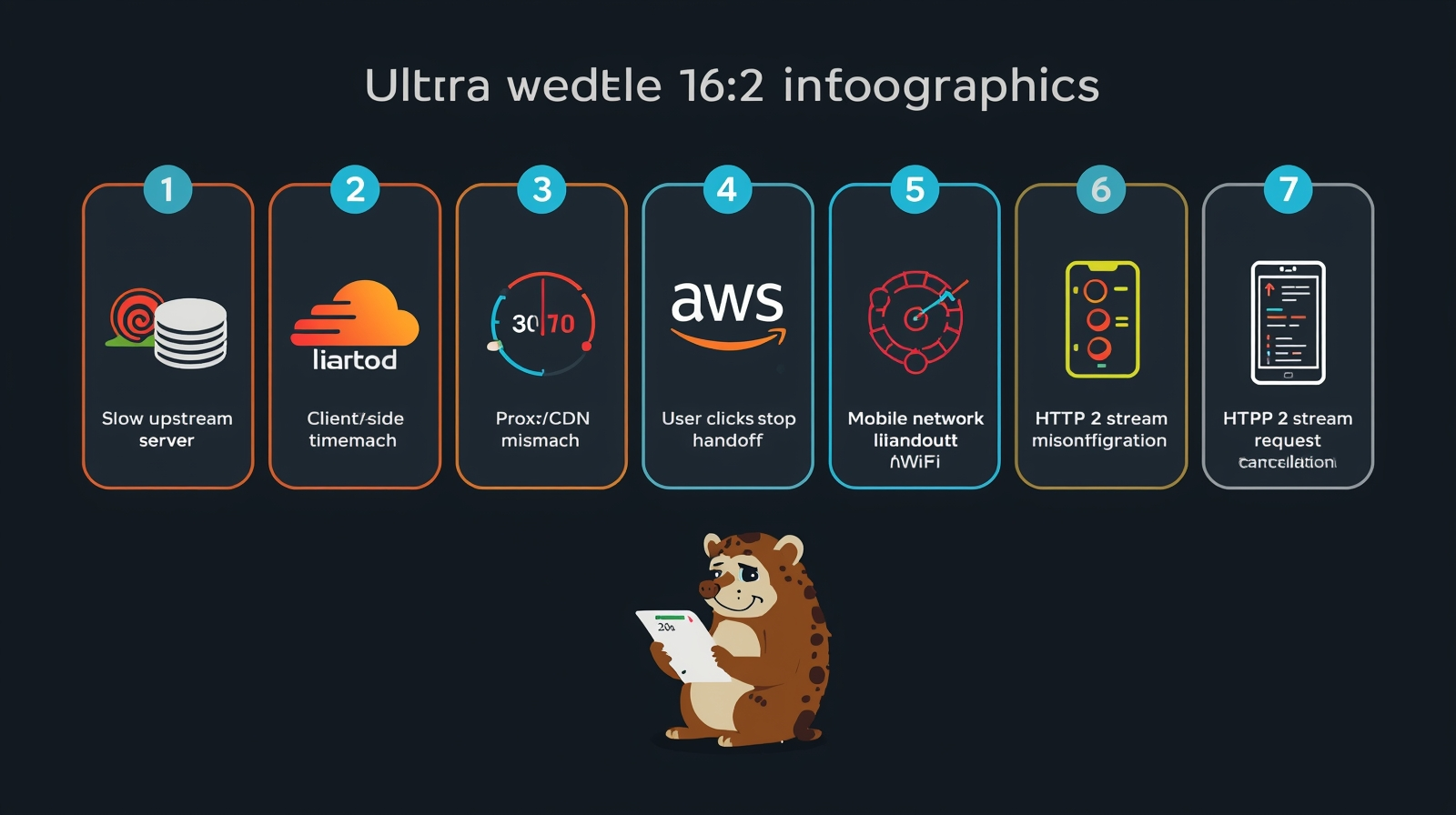

7 real reasons you are seeing 499 errors

Here is a summary of the most common reasons a 499 shows up:

1. Slow upstream server

Nginx proxies the request to your backend app, database, or API. The backend is slow.

The client hits its own timeout and closes the connection. Nginx logs a 499.

The backend may still be running the query; there is simply nobody left to send the result to.

This is behind most 499 spikes in production.

2. Client-side timeout settings

Every HTTP client has its own timeout clock.

Browser defaults are generous (Chrome allows several minutes for a page load). API clients are a different story: code built on Axios, Fetch, Python Requests, or curl is frequently configured with a timeout of 30 seconds or less.

A request that takes 35 seconds will then fail consistently because of the client-side timeout.

Mobile apps are particularly aggressive. Many implement 10–15 second timeouts to save battery.

This produces 499s in API logs that look like server problems but are really client configuration problems.
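To make the client-side clock concrete, here is a minimal, self-contained sketch using only Python's standard library. Everything in it is an assumption for illustration: `SlowHandler` stands in for a slow backend, the 0.5-second delay and the timeout values are arbitrary, and there is no Nginx in the loop. With Nginx in front of such a backend, the abandoned request is the one that would be logged as a 499.

```python
import socket
import threading
import time
import urllib.error
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer


class SlowHandler(BaseHTTPRequestHandler):
    """Hypothetical slow backend: sleeps before answering every GET."""

    delay_seconds = 0.5

    def do_GET(self):
        time.sleep(self.delay_seconds)
        try:
            self.send_response(200)
            self.end_headers()
            self.wfile.write(b"done")
        except OSError:
            pass  # the client already hung up; nobody is left to receive the result

    def log_message(self, format, *args):
        pass  # keep the demo quiet


def start_slow_server():
    """Start the slow backend on a free local port and return (server, url)."""
    server = HTTPServer(("127.0.0.1", 0), SlowHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server, f"http://127.0.0.1:{server.server_port}/"


def fetch(url, timeout_seconds):
    """GET the URL with an explicit client-side timeout."""
    # An empty ProxyHandler bypasses any system proxy so the demo stays local.
    opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
    try:
        with opener.open(url, timeout=timeout_seconds) as response:
            return response.read().decode()
    except (socket.timeout, urllib.error.URLError):
        return "client gave up"


if __name__ == "__main__":
    server, url = start_slow_server()
    print(fetch(url, timeout_seconds=0.1))  # shorter than the backend delay
    print(fetch(url, timeout_seconds=5))    # longer than the backend delay
    server.shutdown()
```

The backend does the same work in both calls; only the client's patience changes, which is why this class of 499 is a configuration issue rather than a server fault.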

3. Proxy or CDN timeout mismatch

Every layer in your request chain has its own timeout window.

Cloudflare's documentation specifically notes that if a client's timeout is shorter than 38 seconds, Cloudflare logs a 499 status code, even when the upstream server is perfectly healthy.

AWS ALBs have their own idle timeout (60 seconds by default), which can cause the same false positive.

These are false 499s: the error appears in your logs and the server is fine, but a proxy layer recorded the client's impatience.

4. User clicks stop or refreshes

Someone lands on a slow page, clicks stop, or presses F5.

Nginx logs a 499. In isolation, that means nothing.

Dozens per hour on a single endpoint mean users are repeatedly abandoning that page, which is worth fixing, but for UX reasons rather than server-error reasons.
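One way to tell an isolated abandonment from a pattern is to count 499s per endpoint per hour. The sketch below assumes Nginx's default "combined" access log format; the function name and the default threshold of 24 ("dozens per hour") are hypothetical choices, not recommended values.

```python
import re
from collections import Counter

# Assumes the default "combined" format, e.g.
# 203.0.113.5 - - [10/Jan/2026:12:00:01 +0000] "GET /checkout HTTP/1.1" 499 0 "-" "Mozilla/5.0"
LOG_RE = re.compile(
    r'\[(?P<hour>[^:\]]+:\d{2}):\d{2}:\d{2} [^\]]+\] '
    r'"\S+ (?P<path>\S+) [^"]*" (?P<status>\d{3}) '
)


def abandoned_per_hour(lines, threshold=24):
    """Return {(hour, path): count} for endpoints with at least `threshold` 499s in one hour."""
    counts = Counter()
    for line in lines:
        match = LOG_RE.search(line)
        if match and match["status"] == "499":
            path = match["path"].split("?", 1)[0]  # group by path, ignore the query string
            counts[(match["hour"], path)] += 1
    return {key: n for key, n in counts.items() if n >= threshold}


if __name__ == "__main__":
    with open("/var/log/nginx/access.log") as log:
        for (hour, path), n in sorted(abandoned_per_hour(log).items()):
            print(hour, path, n)
```

Endpoints that show up here hour after hour are the pages users keep abandoning; a single entry that never repeats is usually noise.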

5. Mobile network switching

A user switches from LTE to WiFi in the middle of a request.

The TCP connection drops. Nginx logs a 499.

As HTTP/3 (QUIC) adoption grows, this is becoming less common. QUIC connections survive network changes better than TCP because their connection state is tied to a connection ID rather than an IP address.

But if you are seeing unexplained 499s from mobile users on HTTP/1.1 or HTTP/2 endpoints, this is a real cause.

6. Misconfigured Nginx timeout directives

The four timeout directives most relevant to 499s:

nginx

client_header_timeout 60s;  # Wait for the client to send the request headers
client_body_timeout   60s;  # Wait for the client to send the request body
proxy_read_timeout    60s;  # Wait for the upstream server's response
fastcgi_read_timeout  60s;  # Wait for PHP-FPM to respond

The default for proxy_read_timeout is 60 seconds, per the Nginx proxy module documentation.

If your backend regularly takes 90 seconds for specific operations (large exports, complex reports, batch jobs), those endpoints will fail systematically until you adjust the timeout for that specific location. Nginx returns a 504 when its own read timeout fires first, and logs a 499 when the client or a layer in front of it gives up first.

7. HTTP/2 stream cancellation

HTTP/2 multiplexes several requests over a single TCP connection using streams.

If a client resets a specific stream (because of a slow response or user navigation), Nginx logs a 499 for that stream while other concurrent requests on the same connection succeed.

This creates inconsistent 499 patterns that look random but are really stream-level cancellations.

A sudden spike right after enabling HTTP/2 is not a coincidence.

How to fix a 499 error: From quick wins to advanced

For non-developers:

If you are a site owner seeing 499 errors, the most practical first step is to find out whether they are concentrated on one page or spread across the whole site.

If it's a single page, that page has a performance problem. If it's site-wide, your hosting environment may be under-resourced for your traffic load.

For developers:

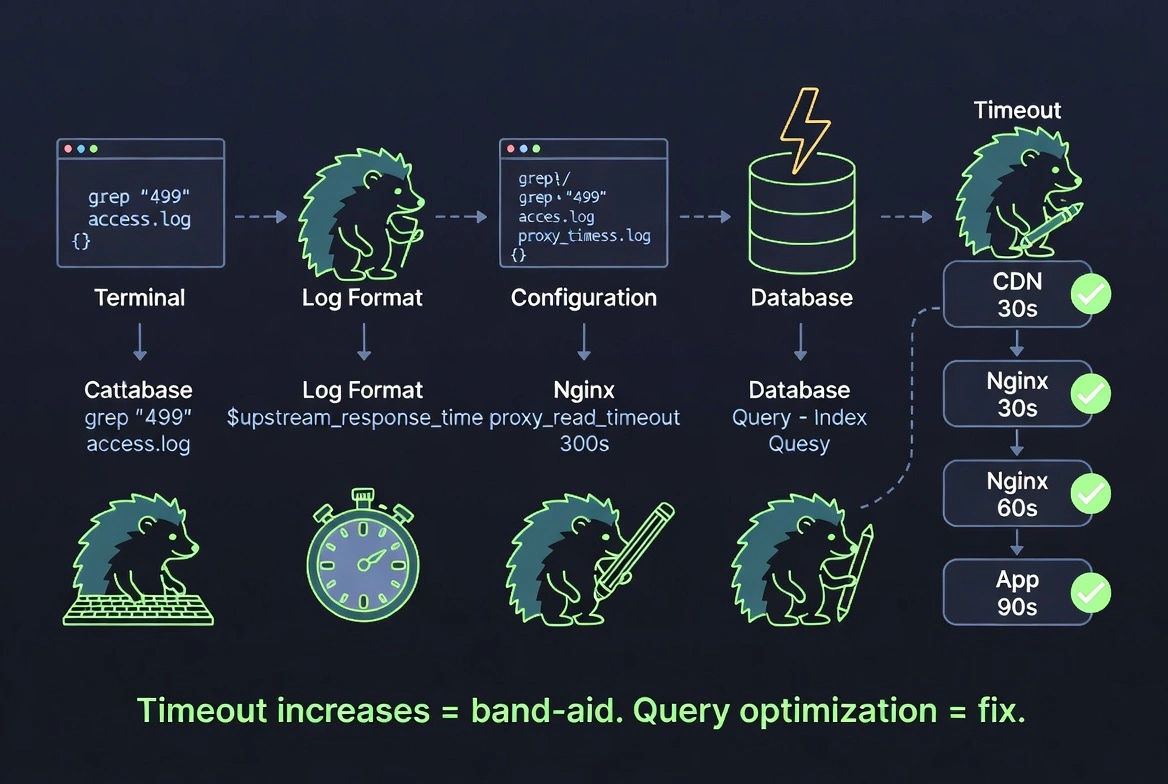

Step 1: Find out which URLs are generating 499s:

bash

# Count 499s per request path, most frequent first.
# Assumes the default "combined" log format: field 9 is the status, field 7 the path.
awk '$9 == 499 {print $7}' /var/log/nginx/access.log | sort | uniq -c | sort -rn | head -20

Step 2: Measure upstream response time.

Add $upstream_response_time to your Nginx log format.

If responses close to your proxy_read_timeout value are generating 499s, your upstream needs optimization, not just a timeout increase.
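Once upstream timing is in the log, a short script can show how slow the upstream gets on the endpoints that produce 499s. This is a sketch under a stated assumption: the access log uses the default "combined" format with ` urt=$upstream_response_time` appended (a hypothetical layout, shown in the comment). The function names are illustrative.

```python
import re
from collections import defaultdict

# Assumed (hypothetical) Nginx log format, i.e. "combined" plus upstream timing:
#   log_format timed '$remote_addr - $remote_user [$time_local] "$request" '
#                    '$status $body_bytes_sent "$http_referer" "$http_user_agent" '
#                    'urt=$upstream_response_time';
TIMED_RE = re.compile(
    r'"\S+ (?P<path>\S+) [^"]*" (?P<status>\d{3}) .* urt=(?P<urt>.+)$'
)


def upstream_times(value):
    """Parse $upstream_response_time, which may be '-' or list several upstreams."""
    parts = re.split(r"[,:]\s*", value)
    return [float(p) for p in parts if p.strip() not in ("", "-")]


def summarize_499s(lines):
    """Return {path: (count_499, slowest_upstream_seconds)} for paths that logged 499s."""
    count_499 = defaultdict(int)
    slowest = defaultdict(float)
    for line in lines:
        match = TIMED_RE.search(line.rstrip())
        if not match:
            continue
        path = match["path"].split("?", 1)[0]
        if match["status"] == "499":
            count_499[path] += 1
        # Track the slowest upstream time seen for this path, whatever the status.
        for seconds in upstream_times(match["urt"]):
            slowest[path] = max(slowest[path], seconds)
    return {path: (n, slowest[path]) for path, n in count_499.items()}
```

A path whose slowest upstream time sits just under proxy_read_timeout, next to a high 499 count, is a candidate for upstream optimization rather than a bigger timeout.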

Step 3: Raise the timeout only for specific slow endpoints:

nginx

location /api/export {
    proxy_read_timeout 300s;  # Longer read timeout for this slow endpoint only
    proxy_pass http://backend;
}

Do not raise it globally; that masks the real problem.

Step 4: Fix the upstream.

Slow database queries, lookups without an index, and synchronous calls to external APIs are the real causes.

Timeout increases are stopgaps.

Query optimization, caching, and asynchronous processing are the fixes.

Step 5: Align the timeouts across the proxy chain.

Your timeout chain should be incremental: upstream application < Nginx < load balancer < CDN.

If your CDN times out at 30 seconds and Nginx allows 60 seconds, the CDN will generate false 499s before Nginx has a chance to respond.

Audit every layer.
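The audit can be reduced to one check: each layer's timeout must be strictly longer than the layer behind it. Here is a minimal sketch; the function name, layer names, and timeout values are hypothetical, and you would fill in the chain from your own configuration.

```python
def find_timeout_inversions(chain):
    """Report layers that give up no later than the layer behind them.

    `chain` is a list of (layer_name, timeout_seconds) ordered from the
    innermost layer (the application) to the outermost (the CDN).
    """
    problems = []
    for (inner, inner_t), (outer, outer_t) in zip(chain, chain[1:]):
        if outer_t <= inner_t:
            problems.append(
                f"{outer} ({outer_t}s) gives up no later than {inner} ({inner_t}s)"
            )
    return problems


if __name__ == "__main__":
    # Hypothetical values: the CDN is less patient than the layers behind it.
    chain = [("app", 25), ("nginx", 60), ("load balancer", 65), ("cdn", 30)]
    for problem in find_timeout_inversions(chain):
        print(problem)
```

An empty result means the chain is incremental; any reported pair is a place where an outer layer can cut off a request that the inner layer would still have answered.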

Step 6: For automation workflows:

Set explicit client timeouts that match the server's real response window.

A Playwright or Puppeteer script that relies on a 30-second default and hits a 45-second endpoint will fail every time.

How CyberYozh proxies help you avoid false 499 errors

When proxies sit in your request chain, for scraping, automation, or multi-account workflows, they become an additional source of latency. A proxy with poor IP reputation, heavy contention in a shared pool, or a geographic mismatch with the target server adds latency on every hop.

For requests already close to the client's timeout limit, that extra latency is what pushes a borderline request over the limit and into a 499. This is the proxy layer's contribution to false 499s, and it is entirely avoidable.

Consistent, low-latency connections

CyberYozh's residential, mobile, and datacenter proxies maintain stable connection times to upstream servers.

The "noisy neighbor" latency spikes common in high-density shared proxy pools, where one abusive user's traffic degrades response times for everyone else, are eliminated on dedicated proxies.

IP reputation pre-check before connecting

A flagged or throttled IP adds overhead before your request even reaches the target.

CyberYozh checks an IP's reputation before routing production traffic through it.

An IP that the target has already throttled will return slower responses, pushing marginal requests into 499 territory.

Geographic proxy matching

Routing a request for a US-based target through a European residential proxy adds unnecessary latency.

CyberYozh's global locations let you match the proxy region to the target server's region, minimizing round-trip time.

Automation-friendly timeout configuration

The CyberYozh API integrates with Playwright, Puppeteer, and Selenium.

You configure timeouts at the request level to match the server's real response windows, not tool defaults that were chosen without your specific target in mind.

What proxies cannot fix:

A genuinely slow upstream server.

If the backend takes 120 seconds and the client timeout is 60, no proxy solves that.

CyberYozh removes the proxy layer's contribution to 499s. Slow application logic is a separate problem.

Conclusion: Should you worry about 499 errors?

A few per day: normal. Don't investigate.

A spike concentrated on one endpoint: a real signal. Something got slower: a database query, an external API call, or a recent deployment. Find it with $upstream_response_time and fix the cause instead of raising the timeout.

A site-wide spike: check your infrastructure. Hosting under load, degrading upstream services, or a misconfigured CDN timeout may be causing false 499s across the board.

For anyone running proxies in their workflow, the proxy layer is often overlooked. A slow, flagged, or geographically mismatched proxy quietly pushes borderline requests into 499 territory.

A clean, dedicated, geographically appropriate proxy infrastructure removes that variable entirely, so when you see a 499, you know it is a real backend problem and not a proxy artifact. Sign up with CyberYozh for a realistic infrastructure.
