How to Scrape Google Trends Data: The Complete Practical Guide (2026)

Why would anyone want to scrape Google Trends in 2026? Before a single line of code, the "why" deserves a moment.

Google Trends is something genuinely rare on the internet:

a real-time, publicly accessible window into what millions of people are actively thinking about, searching for, and paying attention to.

Not surveys.

Not self-reported behavior.

Actual search queries, aggregated and indexed as relative interest signals, are updated continuously.

That's an extraordinary data source. The problem is the interface.

Google Trends' website is built for casual exploration. You can type in a keyword, look at a chart, and form a rough impression of how interest has moved.

What you cannot do, not through the website, is pull weekly interest data across 200 keywords simultaneously, monitor when emerging topics start spiking, compare geographic demand across 40 markets, or integrate Trends signals into an automated content or business intelligence pipeline.

Scraping solves exactly this gap. It takes a tool designed for individual curiosity and transforms it into a scalable data infrastructure.

TL;DR:

The most reliable way to scrape Google Trends data in 2026 is with pytrends, an unofficial Python library that interfaces with Google's internal Trends API, no API key required.

For higher-volume scraping or more complex requirements, browser automation via Playwright offers more flexibility.

At meaningful scale, both methods need rotating residential proxies to avoid IP-based rate limiting.

Google Trends data is publicly available, legally accessible for analysis, and used daily by SEO professionals, content marketers, researchers, and financial analysts worldwide.

Who actually uses this at scale:

A Shopify store owner noticed through Google Trends that interest in "Stanley cup tumbler" started climbing steeply in October 2022, about six weeks before it became a cultural phenomenon.

She tripled her accessories inventory at cost. Her competitors, manually tracking the same trend, responded six weeks after the search spike had already peaked. She captured the surge in demand; they chased it.

That's what programmatic Google Trends access actually does for real people. The same logic applies to SEO keyword prioritization, editorial content calendars, financial sentiment analysis, academic research, and competitive intelligence.

The data is there. The question is whether you're accessing it systematically or incidentally.

Is it legal to scrape Google Trends?

Direct answer: Scraping Google Trends is not illegal under the laws of the United States, European Union, United Kingdom, or most other major jurisdictions. It may conflict with Google's Terms of Service, which broadly prohibit automated access, but this is a contractual consideration, not a criminal one, and enforcement against individual researchers or analysts is effectively nonexistent.

Let's put this in context rather than leaving it vague:

The Computer Fraud and Abuse Act in the US, the Computer Misuse Act in the UK, and the GDPR in the EU all address unauthorized system access and the protection of personal data.

Scraping publicly available, anonymized aggregate data from Google Trends doesn't meaningfully implicate any of these frameworks.

A 2022 US Ninth Circuit ruling in hiQ Labs v. LinkedIn held that scraping publicly accessible data (data that doesn't require login or authentication to view) likely does not violate the CFAA.

Google's Terms of Service are a separate consideration. They are a contract between you and Google, not a law. In practice, violating them results in IP blocking or service termination: technical consequences rather than criminal ones.

The pragmatic framework used by professionals:

Throttle requests. Don't send hundreds of requests per minute. Reasonable automated access mimics human behavior.

Scrape what you need for analysis. Don't archive the entire dataset without a purpose.

Don't redistribute raw scraped data commercially. This is where licensing questions become real.

Use the data for insight. Content strategy, research, and business intelligence are exactly what Google Trends was designed to inform.

The overwhelming majority of SEO professionals, journalists, academic researchers, and data analysts who scrape Google Trends do so every day without incident. The risk is real in theory, negligible in practice for legitimate use cases.

Understanding what Google Trends data is

Before writing code, understanding what you're pulling matters because Google Trends data has a quirk that confuses beginners and frustrates intermediate users alike.

Google Trends doesn't show absolute search volumes:

It shows relative interest, a score from 0 to 100 where 100 represents the peak interest within your selected timeframe and geography.

50 means half of the peak.

0 means insufficient data to display.

This is deliberately normalized data.

Google doesn't release raw search volume counts through Trends.

What this means practically:

If you pull data for "electric vehicles" over 5 years, the peak week's score is 100.

Every other week is scored relative to that peak.

If you then pull a separate query for "solar panels" over the same period, its peak also scores 100, even though the absolute search volume for "solar panels" was only a tenth of that for "electric vehicles."

This is the normalization problem. It makes cross-query comparisons within a single data pull meaningful, but unreliable across separate data pulls.
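
To make the effect concrete, here is a minimal sketch using invented weekly counts. The numbers and the to_trends_scale helper are hypothetical, and the rescaling is a simplification: Google's real normalization also accounts for total search volume in the chosen geography and time range.

```python
def to_trends_scale(raw_counts):
    """Rescale a series so its own maximum maps to 100 (simplified Trends-style scoring)."""
    peak = max(raw_counts)
    return [round(100 * count / peak) for count in raw_counts]


# Hypothetical weekly search counts, invented purely for illustration
electric_vehicles = [50_000, 80_000, 100_000, 60_000]
solar_panels = [5_000, 8_000, 10_000, 6_000]

# Pulled separately: each series is scaled against its own peak
separate_ev = to_trends_scale(electric_vehicles)
separate_solar = to_trends_scale(solar_panels)

# Pulled together: both series are scaled against the shared peak
shared_peak = max(electric_vehicles + solar_panels)
joint_solar = [round(100 * count / shared_peak) for count in solar_panels]

print(separate_ev)     # identical to separate_solar despite a 10x volume gap
print(separate_solar)
print(joint_solar)     # the gap only shows up when both share one scale
```

Pulled separately, the two series look identical. Only the joint pull reveals that one keyword sits at a tenth of the other.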

The solution: Always compare keywords within the same pytrends build_payload() call. Including a consistent "anchor" keyword (one with stable, well-understood search volume) in every batch lets you normalize data across multiple pulls.

For example, including "weather", a keyword with consistent, predictable search volume, as an anchor in every batch allows you to calibrate relative interest comparably across separate requests.
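
One way to apply this is to split a long keyword list into batches that each start with the anchor. The sketch below assumes the five-keyword limit per payload mentioned in this guide; make_anchor_batches is a hypothetical helper name, not part of pytrends.

```python
def make_anchor_batches(keywords, anchor, max_per_payload=5):
    """Split keywords into batches that each begin with the anchor keyword."""
    if max_per_payload < 2:
        raise ValueError("need room for the anchor plus at least one keyword")
    slots = max_per_payload - 1  # one slot per batch is reserved for the anchor
    others = [kw for kw in keywords if kw != anchor]
    return [[anchor] + others[i:i + slots] for i in range(0, len(others), slots)]


batches = make_anchor_batches(
    ["content marketing", "SEO strategy", "link building",
     "technical SEO", "keyword research", "on-page SEO"],
    anchor="weather",
)
for batch in batches:
    print(batch)
```

Each resulting list can be passed to build_payload() as kw_list, so every pull carries the same reference series.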

Method comparison: Which scraping approach is right for you?

Before diving into each method, here's the decision table:

| Method | Difficulty | Reliability | Speed | Best Use Case | Main Limitation |
| --- | --- | --- | --- | --- | --- |
| pytrends (Python library) | Beginner | High | Fast | Most standard use cases | Rate limits at volume |
| Direct HTTP requests | Intermediate | Medium-High | Fast | Custom parameters, batching | Requires header/cookie management |
| Browser automation (Playwright) | Advanced | Very High | Slow | Complex pages, avoiding CAPTCHAs | Resource intensive |
| No-code platforms (Apify, etc.) | Beginner | Medium | Variable | Non-developers, quick exports | Ongoing subscription cost |
| Manual CSV export | None | Perfect | Very Slow | One-time small dataset | Not scalable |

Start with pytrends. Move to browser automation only when pytrends consistently fails for your specific use case.

Method 1: Pytrends

Direct answer: pytrends is an unofficial Python library that communicates with Google Trends' internal data API. It requires no API key, installs in seconds, and handles the most common data extraction tasks with minimal code. It's the right starting point for virtually everyone.

What Pytrends is

pytrends (originally authored by user GeneralMills, now maintained by the open-source community) is available on both GitHub and PyPI.

It abstracts Google's internal Trends endpoints into clean Python function calls, handling authentication cookies, request formatting, and response parsing automatically.

It is not an official Google product. Google doesn't endorse it.

But it works reliably for the vast majority of standard use cases and has been in active use since 2015, with consistent community maintenance through 2026.

Installing pytrends:

pip install pytrends

Python 3.7 or later is required. That's the complete installation.

Your first data pull

This script retrieves weekly interest data for a single keyword over the past 12 months:

from pytrends.request import TrendReq

# Initialize: hl sets the language, tz sets the timezone offset
pytrends = TrendReq(hl='en-US', tz=360)

# Build the payload: specify up to 5 keywords here
pytrends.build_payload(
    kw_list=['artificial intelligence'],
    timeframe='today 12-m',
    geo=''  # Empty string = worldwide
)

# Pull interest over time
data = pytrends.interest_over_time()

print(data.head(10))

Run this, and you'll receive a pandas DataFrame: a table with dates as the row index and weekly interest scores (0–100) as values. The isPartial column flags weeks not yet fully indexed; filter those rows out for clean analysis:

data = data[data['isPartial'] == False]

Comparing multiple keywords

One of pytrends' most powerful features is direct keyword comparison, up to five keywords simultaneously, normalized to the same 100-point scale:

from pytrends.request import TrendReq

pytrends = TrendReq(hl='en-US', tz=360)

pytrends.build_payload(
    kw_list=['ChatGPT', 'Gemini', 'Claude AI', 'Copilot'],
    timeframe='today 12-m',
    geo='US'
)

data = pytrends.interest_over_time()
data = data[data['isPartial'] == False]

# Which platform has the highest average interest?
print(data[['ChatGPT', 'Gemini', 'Claude AI', 'Copilot']].mean())

This comparative data is genuinely strategic. If you're creating content in the AI tools space, knowing which platform's search interest is growing versus plateauing tells you where audience attention is actively migrating, before that shift is visible in any other data source.

Geographic interest data

Where in the world is interest concentrated? This pulls country-level data:

pytrends.build_payload(
    kw_list=['electric vehicles'],
    timeframe='today 12-m'
)

# Country-level interest
by_country = pytrends.interest_by_region(resolution='COUNTRY', inc_low_vol=True)

# Sort by interest descending
print(by_country.sort_values('electric vehicles', ascending=False).head(20))

For regional resolution within a single country (US states, UK regions), set the country in build_payload() and adjust the resolution parameter. Note that interest_by_region() itself takes no geo argument; the geography comes from the payload:

pytrends.build_payload(
    kw_list=['electric vehicles'],
    timeframe='today 12-m',
    geo='US'
)

by_state = pytrends.interest_by_region(resolution='REGION')

This is invaluable for businesses making geographic expansion decisions or advertisers allocating regional budgets.

Rising related queries: The most underused feature

This is where Google Trends becomes a genuine content intelligence tool:

pytrends.build_payload(
    kw_list=['remote work tools'],
    timeframe='today 3-m'
)

related = pytrends.related_queries()

# Rising queries: growing fastest relative to their usual volume
print(related['remote work tools']['rising'])

# Top queries: the most popular related searches overall
print(related['remote work tools']['top'])

Rising related queries show what people are searching for alongside your keyword, and which of those searches are growing fastest. For content marketers, this is three to six weeks of early-mover keyword intelligence. Publish on a rising related query before it reaches peak interest, and your content is positioned for the demand curve rather than chasing it.

Real-time trending searches

For monitoring what's trending right now:

trending_today = pytrends.trending_searches(pn='united_states')

print(trending_today.head(20))

This returns the top 20 trending searches in the specified country at the time of the request. Useful for news monitoring, content reactivity strategies, and social media trend identification.

Method 2: Direct HTTP requests

Direct answer: Direct HTTP requests give you granular control over query parameters, headers, and session management that pytrends abstracts away. This is appropriate for advanced users who need custom timeframe formats, precise category filtering, or high-volume batch request management.

Under the hood, pytrends makes GET requests to Google's internal Trends endpoints, primarily:

https://trends.google.com/trends/api/widgetdata/multiline

https://trends.google.com/trends/api/explore

These endpoints return JSON data preceded by a protection prefix, )]}', that must be stripped before parsing.

They also require specific session cookies and headers that Google's server validates.

Here's a working pattern using the requests library:

import requests
import json

session = requests.Session()

# First, establish a session by visiting the main Trends page
session.get('https://trends.google.com/')

# The explore endpoint generates widget tokens for data requests
params = {
    'hl': 'en-US',
    'tz': '360',  # Same timezone offset as the pytrends examples
    'req': json.dumps({
        "comparisonItem": [
            {"keyword": "python programming", "geo": "", "time": "today 12-m"}
        ],
        "category": 0,
        "property": ""
    }),
    'token': '',
    'user_type': ''
}

response = session.get(
    'https://trends.google.com/trends/api/explore',
    params=params
)

# Fail loudly on 429 or 5xx instead of trying to parse an error page
response.raise_for_status()

# Strip the protection prefix before parsing
clean_response = response.text.lstrip(")}']\n")

data = json.loads(clean_response)

This is more complex than pytrends but gives you precise control over every aspect of the request. Most users don't need this level of control, but for production pipelines with specific batching requirements or non-standard timeframe formats, it's the appropriate approach.
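
The explore call is only the first half of the exchange: its response carries the widget tokens that the data endpoints expect. The sketch below shows one way to turn that response into parameters for the multiline endpoint. The response layout (a "widgets" list whose entries carry "id", "token", and "request") is an assumption based on how pytrends reads it, and build_multiline_params is a hypothetical helper name; the sample dictionary is invented so the example runs without network access.

```python
import json


def build_multiline_params(explore_data, tz="360"):
    """Pick the time-series widget from an explore response and build query params for it."""
    for widget in explore_data.get("widgets", []):
        if widget.get("id") == "TIMESERIES":
            return {
                "req": json.dumps(widget["request"]),  # echoed back to the server as-is
                "token": widget["token"],
                "tz": tz,
            }
    raise KeyError("no TIMESERIES widget found in explore response")


# Invented stand-in for a parsed explore response (assumed layout)
sample_explore = {
    "widgets": [
        {"id": "GEO_MAP", "token": "tok-geo", "request": {}},
        {"id": "TIMESERIES", "token": "tok-time",
         "request": {"time": "2024-01-01 2024-12-31"}},
    ]
}

multiline_params = build_multiline_params(sample_explore)
print(multiline_params["token"])
```

The returned dictionary would be passed as params to a GET on the widgetdata/multiline URL listed above, with the same prefix stripping applied to the response.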

Method 3: Browser automation with Playwright

Direct answer: Playwright automates a real browser, making your scraping behavior virtually indistinguishable from genuine human browsing. It's the most robust method to avoid rate limits and CAPTCHA, but it's significantly slower and more resource-intensive than API-based approaches.

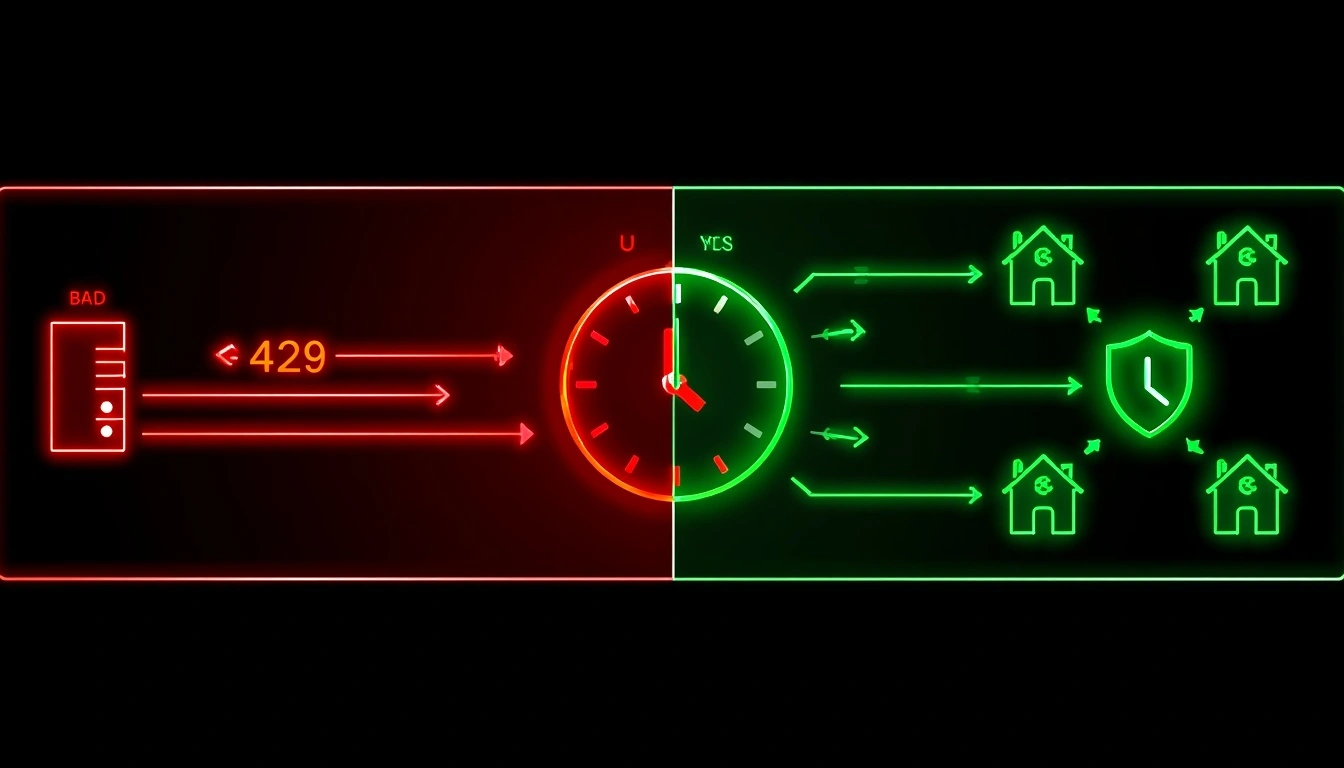

When to use Playwright instead of pytrends:

pytrends is returning consistent 429 errors, even with proxies and delays

You need to capture visual or interactive page elements not exposed by the API

You need to handle CAPTCHA challenges (with appropriate third-party solvers)

You're scraping niche Trends pages with complex navigation patterns

A reliable Playwright setup:

from playwright.sync_api import sync_playwright
import time

with sync_playwright() as p:
    # Launch browser: headless=False for debugging, True for production
    browser = p.chromium.launch(headless=True)

    # Create a context with realistic browser settings
    context = browser.new_context(
        user_agent='Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36',
        viewport={'width': 1280, 'height': 800}
    )

    page = context.new_page()

    # Navigate to Trends
    page.goto('https://trends.google.com/trends/explore?q=python+programming&date=today+12-m&geo=US')

    # Wait for the data chart to render fully
    page.wait_for_selector('[class*="widget"]', timeout=10000)

    # Add human-like delay
    time.sleep(3)

    # Extract page content for parsing
    content = page.content()

    # Alternatively, intercept the API response directly
    # This is more reliable than parsing HTML

    browser.close()

print("Data extracted successfully")

A more sophisticated approach intercepts the network requests the browser makes while loading the page, capturing the API response directly rather than parsing rendered HTML. This combines the stealth advantages of browser automation with the cleaner data format of API responses. The Playwright Python documentation covers response handling in more detail.
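
A minimal sketch of that interception pattern follows, using Playwright's expect_response to wait for the chart's data request. It assumes the page loads its time series from the widgetdata/multiline endpoint named earlier and that the body starts with the protection prefix; fetch_timeline_via_browser and parse_prefixed_json are hypothetical helper names, and the browser function has not been verified against the live site.

```python
import json

MULTILINE_PATH = "/trends/api/widgetdata/multiline"  # endpoint named earlier in this guide


def parse_prefixed_json(raw_text):
    """Drop everything before the first '{' (the protection prefix) and parse the rest."""
    start = raw_text.find("{")
    if start == -1:
        raise ValueError("no JSON object found in response body")
    return json.loads(raw_text[start:])


def fetch_timeline_via_browser(explore_url, timeout_ms=30000):
    """Load a Trends explore page and return the parsed time-series API response."""
    # Imported here so the parsing helper stays usable without Playwright installed
    from playwright.sync_api import sync_playwright

    with sync_playwright() as p:
        browser = p.chromium.launch(headless=True)
        page = browser.new_page()
        # Wait for the first response whose URL matches the data endpoint
        with page.expect_response(
            lambda response: MULTILINE_PATH in response.url,
            timeout=timeout_ms,
        ) as response_info:
            page.goto(explore_url)
        body = response_info.value.text()
        browser.close()
    return parse_prefixed_json(body)
```

Calling fetch_timeline_via_browser() with the explore URL from the example above would return the decoded JSON, or raise a timeout error if no matching request is seen within timeout_ms.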

Why rate limits happen and how to handle them

This is the section most tutorials omit, and it's why beginners get stuck after their first few successful requests.

Google Trends has IP-based rate limiting. Send too many requests in a short timeframe from a single IP address, and you'll start receiving 429 errors (Too Many Requests). On some configurations, you'll receive a CAPTCHA challenge instead. Neither means your code is broken; it means you've triggered Google's automated traffic management.

Solution 1: Add request delays

The simplest fix. A time.sleep() call between requests allows Google's systems to reset their per-IP counter:

import time
from pytrends.request import TrendReq

pytrends = TrendReq(hl='en-US', tz=360)

keywords = [
    'content marketing', 'SEO strategy', 'link building',
    'technical SEO', 'keyword research', 'on-page SEO'
]

results = {}

for keyword in keywords:
    pytrends.build_payload([keyword], timeframe='today 12-m')
    results[keyword] = pytrends.interest_over_time()
    print(f"✓ {keyword}")
    time.sleep(15)  # 15 seconds between requests

print("All keywords retrieved.")

For batch jobs with up to 50 keywords per session, 10–15-second delays are typically sufficient. For larger batches, increase to 30–60 seconds and split across multiple sessions.
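
Fixed delays can be paired with a retry that waits longer after each failure. The sketch below uses exponential backoff with jitter, a general technique that is not specific to pytrends; call_with_backoff and its parameters are hypothetical names, and the base delay of 15 seconds simply mirrors the figure used above.

```python
import random
import time


def call_with_backoff(fn, max_attempts=4, base_delay=15.0,
                      sleep=time.sleep, retry_on=(Exception,)):
    """Call fn(); on failure wait base_delay * 2**attempt (plus jitter) and try again."""
    for attempt in range(max_attempts):
        try:
            return fn()
        except retry_on:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the last error to the caller
            sleep(base_delay * 2 ** attempt + random.uniform(0, 1))


# Usage sketch (commented out so this block makes no network calls):
# def pull():
#     pytrends.build_payload(['content marketing'], timeframe='today 12-m')
#     return pytrends.interest_over_time()
# data = call_with_backoff(pull)
```

Passing a narrower exception type through retry_on avoids retrying on errors that a delay will not fix, such as a malformed timeframe string.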

Solution 2: Rotating residential proxies

For higher-volume automation, IP rotation is the correct architectural solution. Each request routes through a different IP address, so no single IP address accumulates enough requests to trigger rate limiting.

Not all proxies are equal for this use case.

Google maintains extensive lists of data center IP ranges (AWS, Google Cloud, DigitalOcean, Vultr) and rate-limits traffic from these sources far more aggressively than traffic from residential addresses.

Using a data center proxy for Google scraping often provides no meaningful protection at all.

Residential proxies route traffic through IP addresses assigned to real ISP customers, home internet connections, and mobile devices.

To Google's systems, this traffic is indistinguishable from genuine users.

Using CyberYozh proxies for stable Google Trends scraping

Direct answer: Residential proxies prevent Google Trends rate limiting by distributing requests across thousands of different residential IP addresses. CyberYozh offers rotating residential and mobile proxies with geo-targeting capabilities, particularly well-suited for Google Trends automation.

Integrating CyberYozh proxies into a pytrends workflow:

from pytrends.request import TrendReq
import time
import random

# CyberYozh proxy configuration
proxy_config = {
    'https': 'https://USERNAME:PASSWORD@gate.cyberyozh.com:PORT'
}

# Initialize pytrends with proxy support
pytrends = TrendReq(
    hl='en-US',
    tz=360,
    requests_args={
        'proxies': proxy_config,
        'verify': True
    }
)

keywords = ['machine learning', 'deep learning', 'neural networks',
            'computer vision', 'natural language processing']

for keyword in keywords:
    pytrends.build_payload([keyword], timeframe='today 12-m', geo='US')
    data = pytrends.interest_over_time()
    print(f"Retrieved: {keyword} ({len(data)} weeks of data)")
    time.sleep(random.uniform(8, 20))  # Randomize delays to mimic human behavior

The random.uniform(8, 20) delay randomization is important; predictably uniform intervals (exactly 10 seconds every time) can themselves be identified as automated behavior by sophisticated traffic analysis.

Geographic targeting for Trends data:

One often-overlooked application of proxy geo-targeting is pulling Trends data that reflects genuine local search behavior.

Google Trends calibrates relative interest based on the requesting geography in some configurations.

A US residential IP requesting data for a US geo parameter produces more consistent results than a non-US IP does.

CyberYozh's country-level IP targeting supports this use case natively, useful for agencies running multi-market Trends analysis or researchers needing geographically representative data.

Check out CyberYozh proxy catalog for the most affordable and trustworthy IPs globally.

For Playwright-based scraping, the proxy configuration is slightly different:

from playwright.sync_api import sync_playwright

with sync_playwright() as p:
    browser = p.chromium.launch(
        headless=True,
        proxy={
            "server": "https://gate.cyberyozh.com:PORT",
            "username": "USERNAME",
            "password": "PASSWORD"
        }
    )

    # ... rest of automation code

The normalization problem: How to compare data across multiple pulls

This is the issue that trips up intermediate users the most, and it's rarely explained in competitor tutorials.

Google Trends normalizes data within each query.

The highest-interest point in your specified timeframe is always 100.

This makes sense for visualizing a single keyword's trajectory.

It creates a problem when you want to compare two keywords that were pulled in separate requests.

Scenario: You pull data for "keyword A" and get a peak of 100 in March. You then pull data for "keyword B" separately, and also get a peak of 100 in March. Does that mean they had equal search volume? Almost certainly not. They just happened to be at their respective peaks.

The anchor keyword normalization technique:

Include a stable reference keyword in every batch, one with well-understood, consistent search volume. Commonly used anchors include "Facebook," "YouTube," or "weather", keywords that maintain relatively stable search interest month-to-month.

from pytrends.request import TrendReq
import pandas as pd
import time

pytrends = TrendReq(hl='en-US', tz=360)

# Two batches, each including the same anchor keyword
batch_1 = ['Facebook', 'content marketing', 'email marketing', 'social media marketing']
batch_2 = ['Facebook', 'SEO', 'PPC advertising', 'influencer marketing']

pytrends.build_payload(batch_1, timeframe='today 12-m', geo='US')
data_1 = pytrends.interest_over_time()[batch_1]

time.sleep(15)

pytrends.build_payload(batch_2, timeframe='today 12-m', geo='US')
data_2 = pytrends.interest_over_time()[batch_2]

# Now normalize both datasets against the anchor
# The anchor's values are comparable across batches because it appears in both
# The name must match the column label exactly, including capitalization
anchor = 'Facebook'

data_1_normalized = data_1.div(data_1[anchor], axis=0)
data_2_normalized = data_2.div(data_2[anchor], axis=0)

# Now you can meaningfully compare any keyword from batch 1 with any from batch 2
print("Normalized comparison:")
print(pd.concat([
    data_1_normalized.drop(columns=[anchor]),
    data_2_normalized.drop(columns=[anchor])
], axis=1).mean())

Professional data analysts use this technique, and it is rarely documented in introductory tutorials. It unlocks the ability to compare dozens of keywords across multiple batch requests with meaningful, comparable results.
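
A variant of the same idea rescales a whole batch by a single factor, the ratio of the anchor's average in a reference batch to its average in the batch being adjusted, instead of dividing week by week. The sketch below works on plain lists with invented scores; rescale_to_reference is a hypothetical helper, and it assumes the anchor's mean in the batch is not zero.

```python
from statistics import mean


def rescale_to_reference(batch, reference_anchor, anchor="anchor"):
    """Scale every series in batch so its anchor mean matches the reference anchor mean."""
    factor = mean(reference_anchor) / mean(batch[anchor])
    return {kw: [value * factor for value in values] for kw, values in batch.items()}


# Invented scores: in batch 2 a very popular keyword pushes the anchor down to about 50
batch_1 = {"anchor": [100, 90, 95], "kw_a": [10, 12, 11]}
batch_2 = {"anchor": [50, 45, 47.5], "kw_b": [100, 80, 90]}

rescaled_2 = rescale_to_reference(batch_2, batch_1["anchor"])

print(rescaled_2["anchor"])  # now lines up with batch 1's anchor
print(rescaled_2["kw_b"])    # expressed on batch 1's scale, so it can exceed 100
```

After rescaling, kw_b and kw_a sit on the same scale, which makes it clear that kw_b is far larger even though both came from pulls capped at 100.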

Real-world use cases: What you actually do with this data

Data without application is just an interesting spreadsheet. Here's what a meaningful Google Trends analysis looks like in practice:

Content calendar optimization

Pull rising related queries weekly for your core topic clusters.

When a related keyword crosses 40+ on the 0–100 scale and continues climbing, publish a focused piece on that topic immediately.

Target the growth curve, not the peak.
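
The rule above can be sketched as a small check on a list of weekly scores. The threshold of 40 comes from the text; the three-week lookback and the function name are assumptions chosen for illustration.

```python
def is_rising_past_threshold(scores, threshold=40, lookback=3):
    """True if the last `lookback` scores climb strictly and the latest is at or above threshold."""
    recent = scores[-lookback:]
    if len(recent) < lookback:
        return False  # not enough history to judge a trend
    climbing = all(earlier < later for earlier, later in zip(recent, recent[1:]))
    return climbing and recent[-1] >= threshold


print(is_rising_past_threshold([10, 20, 35, 42, 48]))  # climbing and above 40
print(is_rising_past_threshold([60, 55, 50]))          # above 40 but falling
print(is_rising_past_threshold([10, 20, 30]))          # climbing but still below 40
```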

Seasonal inventory and ad spend planning

Five years of weekly interest data reveal seasonal patterns with remarkable reliability.

A retailer selling outdoor furniture can see precisely when spring interest begins climbing in their target markets, typically 4–6 weeks before purchase behavior peaks, and time their paid campaigns accordingly.

SEO keyword prioritization

Before investing in a content campaign for a target keyword, verify its trajectory.

A keyword with 30,000 monthly searches and a rising Trends trajectory is a better investment than one with 50,000 searches and a declining trajectory.

You're buying into a growing market rather than a shrinking one.
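
One simple way to put a number on "rising" versus "declining" is the slope of a least-squares line through the weekly scores. This is a generic statistic rather than anything Google Trends reports, and trend_slope is a hypothetical helper name.

```python
def trend_slope(scores):
    """Least-squares slope of scores against week index (points per week)."""
    n = len(scores)
    if n < 2:
        raise ValueError("need at least two data points")
    x_mean = (n - 1) / 2
    y_mean = sum(scores) / n
    numerator = sum((i - x_mean) * (y - y_mean) for i, y in enumerate(scores))
    denominator = sum((i - x_mean) ** 2 for i in range(n))
    return numerator / denominator


rising = trend_slope([10, 20, 30, 40])
declining = trend_slope([40, 30, 20, 10])
print(rising, declining)  # positive means growing interest, negative means shrinking
```

Applied to the interest_over_time() column for a keyword, a positive slope over the last year supports prioritizing it; a negative one is a reason to look closer before investing.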

Competitive brand intelligence

Track your competitors' branded search interest over time.

Sustained growth in a competitor's branded searches is an early-warning signal, more informative than their published metrics and available in real time.

Financial sentiment indicators

Search interest in categories such as "refinancing mortgage," "recession," "layoffs," and "side hustle" serves as a leading indicator of consumer sentiment.

Major hedge funds and economic research institutions use Google Trends as one input in broader economic forecasting models.

Academic and journalism research

Quantifying public attention to news events, policy changes, public figures, and cultural moments over time provides verifiable, citable evidence of collective interest patterns that is more objective than editorial judgment.

Exporting and visualizing Google Trends data

Once you have data, the most common next steps are export and visualization:

from pytrends.request import TrendReq
import matplotlib.pyplot as plt
import pandas as pd

pytrends = TrendReq(hl='en-US', tz=360)

pytrends.build_payload(
    ['AI tools', 'automation software'],
    timeframe='today 5-y',
    geo='US'
)

data = pytrends.interest_over_time()
data = data[data['isPartial'] == False]

# Export to CSV for spreadsheet analysis
data.to_csv('google_trends_export.csv')
print("CSV exported successfully.")

# Create a clean visualization
fig, ax = plt.subplots(figsize=(14, 6))

data['AI tools'].plot(ax=ax, label='AI Tools', color='#2563EB', linewidth=2)
data['automation software'].plot(ax=ax, label='Automation Software', color='#7C3AED', linewidth=2)

ax.set_title('Search Interest Comparison: AI Tools vs Automation Software (5 Years, US)',
             fontsize=14, fontweight='bold', pad=20)
ax.set_ylabel('Interest Score (0–100)', fontsize=11)
ax.set_xlabel('')
ax.legend(fontsize=11)
ax.grid(True, alpha=0.3)
ax.set_ylim(0, 110)

plt.tight_layout()
plt.savefig('trends_comparison.png', dpi=150, bbox_inches='tight')
plt.show()

For teams that prefer spreadsheets to Python, the CSV export is fully compatible with Excel and Google Sheets. The pandas DataFrame structure maps cleanly to tabular formats, with dates in column A and keyword interest values in subsequent columns.

Troubleshooting the most common errors and how to fix them

Error: 429 Too Many Requests. You've exceeded Google's per-IP rate limit. Add or increase time.sleep() delays between requests (start with 15–30 seconds). For persistent 429 errors at any delay level, implement rotating residential proxies.

Error: ResponseError 500. Usually a temporary server issue on Google's end. Wait 5–10 minutes and retry. It is occasionally triggered by malformed payload parameters, so double-check your timeframe string format ('today 12-m', 'today 3-m', '2022-01-01 2023-12-31').

Empty DataFrame returned. Your keyword has insufficient search volume for the specified geography or timeframe. Try broadening the geo parameter (set it to an empty string for worldwide) or extending the timeframe. Some highly specific long-tail keywords simply have insufficient data to return results.

CAPTCHA challenge triggered. Google suspects automated access. Switch to residential proxies, substantially increase delays, and reduce request volume per session. Browser automation with Playwright is more resistant to CAPTCHA triggers than direct API requests.

pytrends installation fails. Ensure Python 3.7+ is installed: python --version. Try upgrading pip first: pip install --upgrade pip, then pip install pytrends.

Partial data only (isPartial = True for most rows). The most recent 2–3 days of Trends data are always partial; Google hasn't fully indexed them yet. Filter these rows out: data = data[data['isPartial'] == False].

Cross-batch normalization gives inconsistent results. See the anchor keyword normalization section above. Always include a stable reference keyword in every batch to enable meaningful cross-batch comparison.
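
Since malformed timeframe strings are one of the error sources listed above, building explicit date ranges from date objects avoids typos. The sketch below produces the 'YYYY-MM-DD YYYY-MM-DD' form shown in the examples; build_timeframe is a hypothetical helper name, not a pytrends function.

```python
from datetime import date


def build_timeframe(start, end):
    """Format two dates as an explicit 'YYYY-MM-DD YYYY-MM-DD' timeframe string."""
    if start > end:
        raise ValueError("start date must not be after end date")
    return f"{start:%Y-%m-%d} {end:%Y-%m-%d}"


timeframe = build_timeframe(date(2022, 1, 1), date(2023, 12, 31))
print(timeframe)
```

The result can be passed directly as the timeframe argument to build_payload().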

Curated GitHub resources

Rather than "search GitHub and you'll find something useful," here are specific resources worth bookmarking:

pytrends official repository: The canonical reference for method documentation, known issues, and changelog. The Issues tab contains solutions to the vast majority of edge-case errors.

Trending searches dashboard projects: several community-maintained repositories build on pytrends to create real-time trending keyword dashboards. Search "pytrends dashboard" on GitHub for the most recently maintained options.

Trends data normalization utilities: repositories specifically addressing the cross-batch normalization problem discussed above. Search for "Google Trends normalization Python" to find the most-starred options.

Apify Google Trends scraper: For non-developers who need Google Trends data without writing code, Apify's maintained Google Trends actor provides a no-code interface with scheduled runs and data export. Costs vary by usage volume.

Build the pipeline once, use it forever

Google Trends is one of the few genuinely free, genuinely powerful data sources that most people use at only 10% of its potential. A one-time investment in setting up a pytrends pipeline, even a basic one running weekly on a schedule, gives you a continuous early-warning system for rising topics, shifting audience interest, and emerging competitive dynamics.

Set up the basics today. Add proxy infrastructure as your volume grows. And start treating public search behavior as the strategic intelligence asset it actually is, rather than something you check manually when you remember to.

The data updates continuously. Whether you're paying attention to it is the only variable you control.